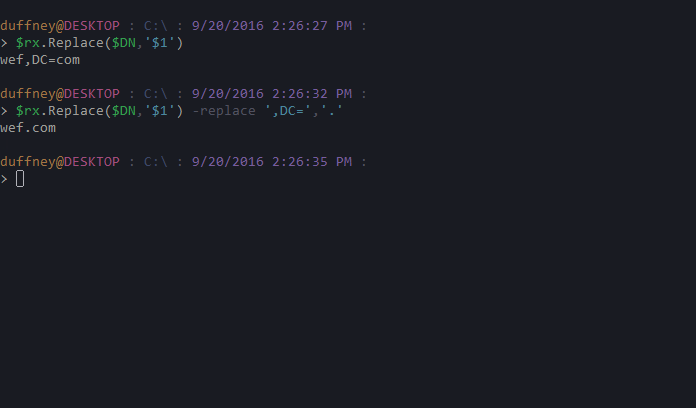

You cannot go straight from raw text to fitting a machine learning or deep learning model. You must clean your text first, which means splitting it into words and handling punctuation, case, and so on. The text preparation methods you need depend on your NLP task.

We will use the text from the book Metamorphosis by Franz Kafka: Metamorphosis by Franz Kafka, Plain Text UTF-8 (you may need to load the page twice).

Once you have your text data, the first step in cleaning it is to have a clear idea of what you are trying to achieve, and to review the text in that context to see what might help. Text cleaning is hard, but the text we have chosen is already fairly clean. We could just write some Python code to clean it up manually, and this is a good exercise to start with.

Let’s load the text data so that we can work with it. To load the text manually, open the file in text mode with `file = open(filename, 'rt')`.

**Note:** The text dataset we have chosen is small, so it will load quickly and fit easily into memory. This will not always be the case, and you may need to write code to memory map the file. Tools like NLTK make working with large files much easier.

**Split by Whitespace**

The next step is converting the raw text into a list of words. A very simple way to do this is to split the document by white space, including spaces, new lines, tabs and more. We can do this in Python with the `split()` function on the loaded string. We can observe that punctuation is preserved. We can also see that end-of-sentence punctuation is kept with the last word (e.g. thought.), which is not great.

Another approach is to use the regex module (`re`) and split the document into words by selecting for strings of alphanumeric characters (a-z, A-Z, 0-9 and ‘_’). This time, armour-like becomes two words, armour and like (fine), but a contraction like What’s also becomes two words, What and s (not great).

**Split by Whitespace and Remove Punctuation**

We may want the words, but without punctuation such as commas and quotes. We also want to keep contractions together. One way is to split the document into words by white space (as in the section Split by Whitespace), then use string translation to replace all punctuation with nothing. Python provides a constant called `string.punctuation` that gives a good list of punctuation characters. Alternatively, we can use regular expressions to select for the punctuation characters and use the `sub()` function to replace them with nothing: `re_punc = re.compile('[%s]' % re.escape(string.punctuation))`.

We can see that this has had the desired effect, mostly. Contractions like What’s have become Whats, but armour-like has become armourlike.

Sometimes text data contains non-printable characters. We can filter them out by selecting the inverse of the `string.printable` constant: `re_print = re.compile('[^%s]' % re.escape(string.printable))`.

It is common to convert all words to one case. This shrinks the vocabulary, but some distinctions are lost (e.g. Apple the company vs apple the fruit is a commonly used example).

Now, let’s have a look at some of the tools in the NLTK library that offer more than simple string splitting.

**Tokenization and Cleaning with NLTK**

Command to install the NLTK library: `sudo pip install -U nltk`

The required text data can be downloaded from a Python script (starting with `import nltk`) or from the command line: `python -m nltk.downloader all`

A good first step is to split the text into sentences. NLTK provides the `sent_tokenize()` function to split text into sentences.

NLTK provides a function called `word_tokenize()` for splitting strings into tokens (nominally words). It splits tokens based on white space and punctuation. For example, commas and periods are taken as separate tokens. For example: `from nltk.tokenize import word_tokenize`
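The manual cleaning steps described above (split by white space, strip punctuation, drop non-printable characters, convert to one case) can be combined into one small function. The following is a minimal sketch using only the standard library, not the article's exact code: the `clean_tokens` helper and the sample sentence are hypothetical, introduced here purely for illustration.

```python
import re
import string

# Character classes built from the standard-library constants.
# re.escape() makes sure characters such as ']' or '\' are treated literally.
RE_PUNC = re.compile('[%s]' % re.escape(string.punctuation))
RE_PRINT = re.compile('[^%s]' % re.escape(string.printable))


def clean_tokens(text):
    """Hypothetical helper: whitespace split, then per-word cleanup."""
    words = text.split()                          # split on spaces, tabs, new lines
    words = [RE_PUNC.sub('', w) for w in words]   # remove ASCII punctuation
    words = [RE_PRINT.sub('', w) for w in words]  # remove non-printable characters
    words = [w.lower() for w in words]            # normalize to lower case
    return [w for w in words if w]                # drop tokens that became empty


# Illustrative sample sentence (an assumption, not taken from the dataset file).
sample = "What's this? An armour-like back, he thought."
print(clean_tokens(sample))
# → ['whats', 'this', 'an', 'armourlike', 'back', 'he', 'thought']
```

Note that `string.punctuation` and `string.printable` cover ASCII only, so typographic quotes such as ’ are not treated as punctuation here and are instead removed by the non-printable filter. Whether that behaviour is acceptable depends on the text and the task.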
